The real pain in web scraping isn’t collecting data. It’s keeping that collection stable once you hit production. Blocking, rate limiting, CAPTCHAs, and dynamic JavaScript sites can break your parser every other week.

Scraping APIs act as infrastructure. They handle the hard parts so you don’t have to. Below are five APIs that are actually used in production. Not demos or side projects, but real tools for real workloads.

Top Web Scraping APIs for Production Use

The market splits into three camps. API-first services keep things simple. AI-first tools handle extraction for you. Enterprise solutions rely on large proxy networks to maintain access.

Each approach fits different use cases. Small teams need low friction. Large companies need reliability at scale. The list below covers tools used in production across all three categories.

1. HasData

HasData takes an AI-first approach to web scraping with the HasData Web Scraping API. The service focuses on LLM pipelines and structured data rather than raw HTML. You don’t get messy markup back. You get clean JSON or Markdown ready for ingestion. This removes a ton of parsing work from your backend team. The infrastructure is managed and scales automatically. No manual proxy tweaking. No browser automation scripts to debug.

Best fit for AI pipelines and structured data extraction

For AI workloads, output format matters more than anything. HasData delivers clean data without you writing selectors or maintaining parsers. RAG systems need fresh content. Training pipelines need structured datasets. This API handles both. The extraction happens server-side with automatic anti-bot bypass and JavaScript rendering.

Key capabilities include:

- AI-based extraction without writing manual selectors;

- LLM-ready Markdown and structured JSON output;

- Automatic proxy rotation and anti-bot bypass;

- JavaScript rendering for dynamic single-page websites;

- High-volume scraping with fully managed infrastructure.

The result is less development overhead and faster time-to-market for AI features.

2. Diffbot

Diffbot uses computer vision and NLP to understand web pages. The system doesn’t just scrape. It reads. It identifies article text, product details, people, companies, and relationships between them. This goes way beyond traditional parsing. You point it at a URL, and Diffbot returns a structured knowledge graph. No rules. No XPath. Just data.

Best fit for automated knowledge extraction and AI datasets

Building knowledge graphs manually is a nightmare. Diffbot automates the entire extraction pipeline. The service powers AI datasets for companies that need entity recognition and relationship mapping at scale. It’s particularly strong for news, e-commerce, and business data.

Key capabilities include:

- AI-based page understanding using computer vision;

- Automatic extraction of entities and relationships;

- Structured knowledge graph generation ready for AI;

- No need for manual scraping rules or selectors;

- Support for large-scale data processing across millions of pages.

This drastically cuts your dependency on manual configuration and maintenance.

3. Import.io

Import.io targets the enterprise market with visual extraction tools. You don’t write code. You point and click on the data you want. The system learns the pattern and builds an extraction pipeline automatically. This makes it accessible to non-technical teams who still need reliable data from websites.

Best fit for enterprise data extraction without engineering overhead

Many companies lack a dedicated scraping team. Import.io solves that problem. Business analysts can set up data extraction workflows without waiting for engineering resources. The platform runs in the cloud and handles scheduling, monitoring, and delivery.

Key capabilities include:

- Point-and-click data extraction workflows for non-developers;

- Automated dataset generation from any website;

- Cloud-based scraping infrastructure with no setup;

- Scheduling and monitoring tools for production pipelines;

- Integration with business intelligence systems like Tableau.

This lowers the entry barrier dramatically. You don’t need a backend team to start scraping.

4.WebScrapingAPI

WebScrapingAPI keeps things simple. It’s an API-first service built for quick integration. Send a request and get HTML back. The service handles proxy rotation and anti-bot measures behind the scenes. There is no complex configuration or infrastructure to manage. This makes it a practical option for teams that want to start collecting data without dealing with setup overhead.

Best fit for simple API-based scraping with minimal setup

For smaller projects or teams that just need basic scraping, this works. You don’t want to spend days tuning proxy pools. You want data. Fast. WebScrapingAPI delivers that with a straightforward REST endpoint and reasonable reliability.

Key capabilities include:

- Simple REST API for quick integration in any language;

- Built-in proxy rotation across different IP types;

- Anti-bot handling without any configuration from you;

- Support for dynamic content rendering when needed;

- Scalable request handling from dozens to thousands of calls.

It’s a basic but reliable tool. Good for getting started. Good for teams that don’t need AI extraction or enterprise scale.

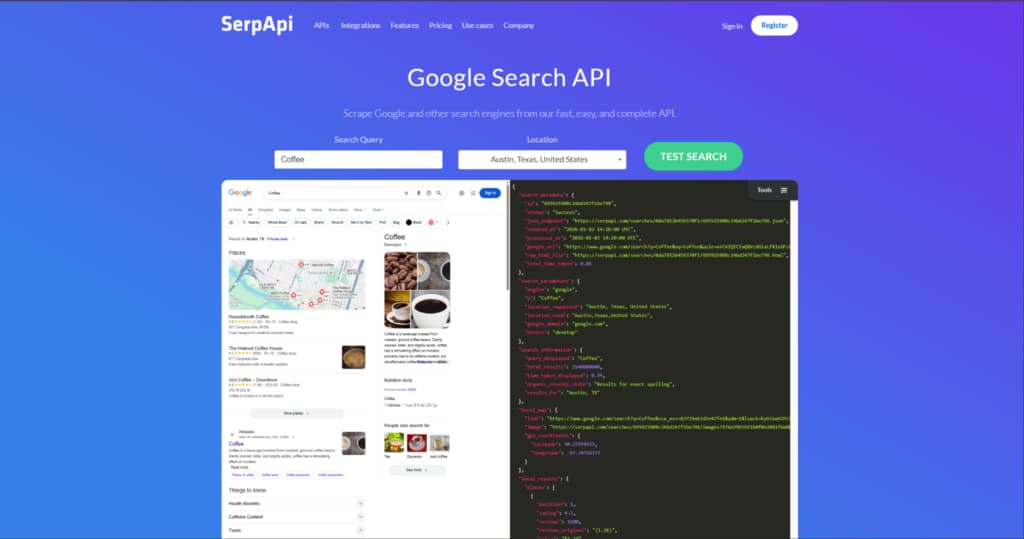

5. SerpApi

SerpApi specializes in search engine results pages. Google, Amazon, Bing, YouTube. The service returns structured data for search queries, ads, product listings, and maps. This is tricky to do yourself because search engines aggressively block scrapers.

Best fit for search engine and SERP data extraction

If you need to monitor search rankings or track product prices across marketplaces, SerpApi is the obvious choice. The service maintains parsers for dozens of search engines. You just send a query and get clean JSON back. It’s reliable and built for real-time data retrieval.

Key capabilities include:

- Structured search engine results data without parsing headaches;

- Support for Google, Amazon, Bing, YouTube, and other SERPs;

- Real-time data retrieval with low latency;

- High reliability for search queries that typically get blocked;

- Easy API integration with minimal setup.

The narrow focus is a strength here. SerpApi does one thing well, and that’s SERP extraction.

How to Choose the Right Web Scraping API

Your use case decides the tool. AI pipelines need structured output like JSON or Markdown. SERP monitoring needs specialized APIs like SerpApi. Enterprise teams might prioritize proxy network size. Small teams often just need something simple and cheap.

Don’t overbuy. Don’t underbuy. Match the tool to the problem.

When choosing a web scraping API, focus on:

- Data output format and structure (raw HTML vs clean JSON);

- Anti-bot capabilities for your specific target sites;

- Scalability and performance under production load;

- Integration with your existing systems and workflows;

- Ease of use and developer experience for your team.

Get this right, and scraping becomes boring. Boring is good in production.

Final Thoughts

Web scraping APIs have become essential infrastructure. The right one saves you weeks of debugging proxy rotations and parser failures. The wrong one creates more problems than it solves. Match the tool to your actual production needs. Start small, test thoroughly, and scale once you’re confident in stability. Over time, the quality of your scraping layer will directly impact how reliable your entire data pipeline becomes.